A new study by a University of Puget Sound professor reveals that AI tools like ChatGPT may speak your language, but they are thinking with an “American mind.”

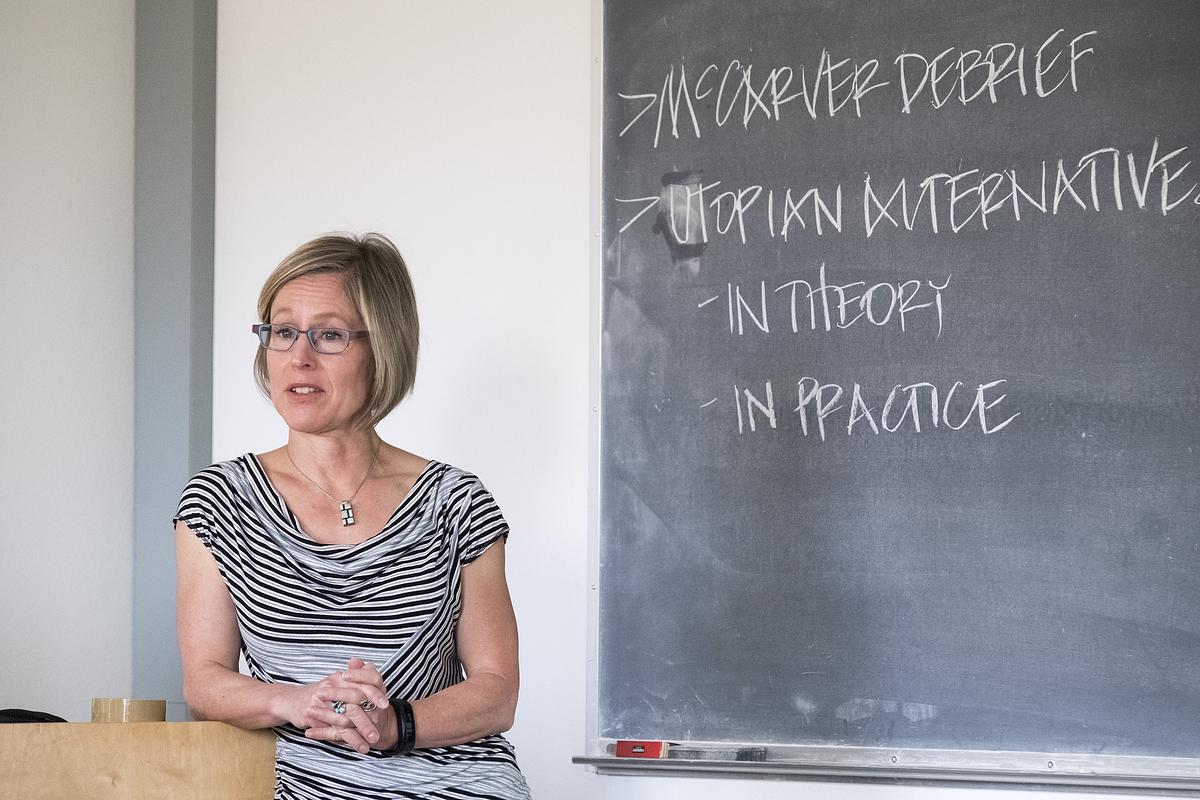

The research, “Epistemological Persistence in Multilingual AI: The Illusion of Locality in Large Language Models,” led by Distinguished Professor of Sociology and Anthropology Gareth Barkin, warns of a subtle cultural imperialism embedded in the world’s most popular AI models. Barkin began his research shortly after the release of ChatGPT.

“I was interested in the real social impact: how AI tools are perpetuating cultural perspectives or ways of thinking in actual use cases,” Barkin said.

His findings suggest a massive data disparity. Despite being fluent in languages from Indonesian to Arabic, large language models (LLMs) are trained on trillions of data tokens that are, in some cases, nearly 90% English. Most of that data originates in the United States. This means the AI’s underlying logic, or its “common sense,” is rooted in Western, individualistic frameworks.

“They can speak a range of languages really well,” Barkin said. “But the core — the vast majority of the training data that plays a major role in shaping their core reasoning — is still English-language material.”

Because they draw primarily on this culturally narrow dataset, AI models risk giving responses that are inappropriate in other social contexts. Conversely, an AI that engages a wider range of human experiences becomes a more versatile and accurate tool, better equipped to navigate the complexities of global business and social relations.

Through a series of experiments, Barkin found that AI consistently reinterprets local cultural concepts through a Western framework. For example, he tested the Indonesian term malu, a complex sense of shame tied to social obligations. Rather than recognizing the web of communal responsibility involved, the AI misinterpreted it as simple personal embarrassment and offered individualistic advice on how to overcome the feeling. By prioritizing self-fulfillment over social obligation, the AI inadvertently provides guidance that could alienate users from their own communities or fail to resolve their issues. Barkin calls this consistent bias toward Western worldviews “epistemological flattening.”

“There’s going to be increasingly one kind of common sense,” Barkin said. “The voice of AI is going to be a predominantly elite, secular, and American voice that’s obscured by very effective translation layers.”

This is particularly concerning as users worldwide turn to AI for personal advice, professional support, and companionship. While a user might receive an answer in their native tongue, the logic behind that answer is often filtered through a WEIRD (Western, educated, industrialized, rich, and democratic) worldview. This epistemological flattening threatens to marginalize centuries of diverse cultural knowledge, replacing local wisdom and communal values with a single, standardized perspective.

“It is a new exercise of Western capital to shape the worldview of people in less wealthy parts of the world,” Barkin said. “Intentional or not, it’s a new vector for the colonization of the mind.”

Barkin’s work underscores a core conviction at Puget Sound that a deep understanding of AI requires a foundation in the liberal arts. This multidisciplinary approach allows researchers to look beyond the code to see the human impact.

“This research wouldn’t have been possible without an anthropological lens,” Barkin said. He explains that although tech companies are trying to make their models feel more local by adding layers of regional data and feedback, these efforts are often just a surface-level facade. The underlying reasoning engine is still an American creation, simply presented in a local language.

As Puget Sound prepares students for an AI-driven world, it remains committed to exploring technology through the lens of human values and intellectual curiosity. Barkin currently serves on the university’s AI working group, which is tasked with assessing the state of AI at Puget Sound, identifying opportunities to use it in teaching, learning, scholarship, and administrative functions, and establishing ethical guidelines for its adoption.

“This is a time for people to join the conversation with their own disciplinary angles on AI,” Barkin said. “We need passionate faculty with diverse expertise to guide this exploration, ensuring our graduates are prepared to lead with the cultural intelligence and ethical grounding the future demands.”